Where You Find Hate on Social Media

By Arushi Parikh and Christine Ong

Published February 23, 2026


“It was about how immigrant families should be separated. And I’m from an immigrant family, so I felt like they were saying it should happen to all of us.”

– UCLA Undergraduate Student

This undergraduate’s words, gathered as part of a larger study exploring how hate spreads on social media, capture a pattern echoed across many survey responses: hate speech does not feel distant; it feels personal. With every scroll, reel, and comment, students risk encountering content that targets who they are and questions their humanity.

Prior research shows that social media platform design plays a key role in amplifying hate. Algorithmic recommendation systems prioritize emotionally charged and polarizing content, while features such as reposting, commenting, and short-form video encourage rapid circulation with limited context or accountability. Scholars have noted that these affordances can normalize harmful speech by embedding it within entertainment, humor, or trending content, making it harder for users, especially young users, to disengage from or challenge it.

In spring 2024, we piloted a survey with a representative sample of UCLA undergraduates, receiving a total of 134 responses across class years. A clear majority (80%) of undergraduates responding to the survey had observed hate speech in the last month (SMASH). The most common types, or targets, of hate speech that undergraduates observed on social media in the last month related to race and ethnicity, gender, and sexual orientation. Additionally, 95% of respondents reported encountering multiple types of hate speech, and more than half reported five or more types, indicating that many experienced a steady stream of hate through social media in the last month. We found similar results when we administered the survey a year later.

But what exactly did undergraduates encounter, and where? To learn more, we added a question to the 2025 survey asking respondents to “Think about the last time you saw hate speech on social media. In a few words, please tell us… Where did you see it? What app? What made it hate speech to you?” One hundred undergraduates answered this question, and we tallied the specific apps they mentioned and the types of targets of hate speech they described.

Sources of Hate Speech

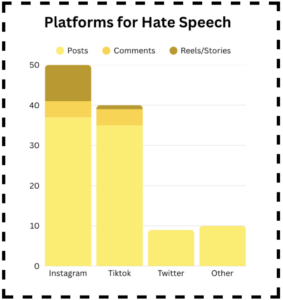

Instagram emerged as the leading source (45 tallies, or 45%), followed by TikTok (36%), Twitter/X (9%), and other platforms, including Facebook, YouTube, Reddit, Pinterest, and Yik Yak (10%). Notably, some participants also identified news sources and gaming platforms as sources of hate. For instance, one student, when asked where they saw hate speech, said, “I saw news clips of politicians spreading hate speech on Reddit.” This suggests that students encounter harmful content across a wide range of digital environments, not just a few social media platforms.

Some survey respondents went further, naming the specific Instagram and TikTok features where they found hate: posts, comment sections, reels, and stories. These results suggest that Instagram reels are a particular hot spot for hate. Reels, stories, and comment sections invite interaction, but they also create conditions in which hate can spread quickly, allowing users to respond to, repost, and build upon harmful content in highly visible ways.

Types of Hate Speech

When participants were asked to explain what made the content they saw “hate speech,” certain themes stood out. Our word cloud and coding analysis showed that racism directed toward marginalized groups was the most commonly reported type of hate speech. Other major categories included discrimination and stereotyping, antisemitism, misogyny, body-shaming, religious intolerance, slurs, ableism, homophobia, and the spread of harmful misinformation.

For example, one undergraduate explained, “It was hate speech because it was targeting a minority population and was invalidating their experiences and calling them slurs.” Another participant stated, “It was targeted to a specific group and demeaned them and made them seem less than human.” A third student pointed to humor as a vehicle for hate speech, specifically body-shaming, describing “Comments mocking someone’s body type by saying you have to keep the food away from them otherwise they will eat it all.” These answers illustrate that hate speech is sometimes subtle and hidden, but it can also be explicit, aggressive, and deeply dehumanizing.

Reactions

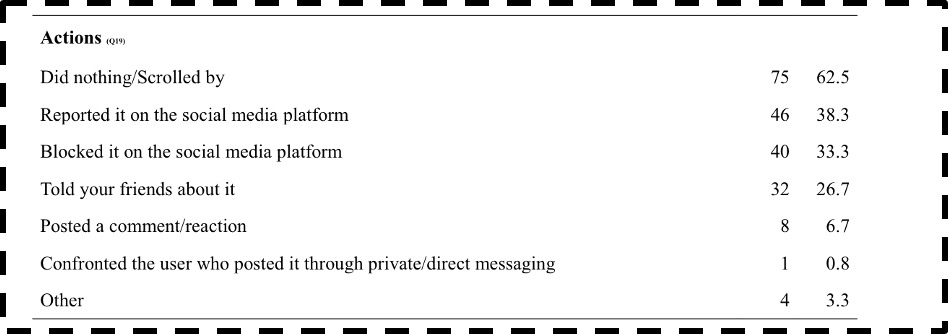

Our survey results indicate that undergraduates commonly encounter hate speech on mainstream, youth-centered platforms like Instagram and TikTok. When asked how they reacted to their most recent encounter with hate speech, a majority (63%) of respondents reported that they “did nothing, scrolled by.” Smaller but sizable shares reported the post to the platform (38%) and/or blocked it (respondents could select more than one option). Why most respondents scrolled by requires further investigation. They may not know what to do, may fear what could happen if they block or report, may not feel it is worth their time, or may believe that their actions won’t make a difference.

This hesitation highlights the need for more guidance, support systems, and educational programs that teach and empower individuals to respond safely and effectively to hate speech. The prevalence of hate speech in environments designed to increase communication and build relationships is deeply concerning. One example is the appearance of biased news sources among the reported sources of hate, which raises questions about the responsibility of media outlets to maintain accuracy and respect for marginalized communities. When news sources themselves are flagged for hate, the credibility of their information and the legitimacy of their brand may be compromised, making it even harder for young audiences to distinguish fact from misinformation.

Where Might We Go From Here?

- Increase awareness of potential hate hot spots: Reels, stories, and comments allow for interactions that may produce more hate and become “hate hot spots,” since others can respond to, relate to, repost, and build upon hateful content in a visible context.

- Understand the reluctance to report: Even though students recognize hate speech, many reported simply scrolling by. More research is needed to understand why; they may not want to engage, may fear what could happen if they do, or may believe that their actions won’t make a difference.

- More effective reporting: Platforms should make the reporting process more accessible and effective so that students feel their actions have a real impact, which might encourage them to take action.

- Community-based solutions: Through SMASH and other ISH initiatives, we can offer more peer-led discussions, workshops, social media literacy programs, and campaigns so that students feel more comfortable taking action.

Bibliography:

1) SMASH Program. (2025). SMASH Undergraduate Survey 2025. https://seis.ucla.edu/smash-project/

2) Pew Research Center. (2018, May 31). Teens, social media & technology 2018. https://www.pewresearch.org/internet/2018/05/31/teens-social-media-technology-2018/ (last accessed December 27, 2023)

3) Christie, C., Gilliland, A., Ong, C., et al. (2024, December 20). The rise of social media hate. UCLA Initiative to Study Hate. https://studyofhate.ucla.edu/smash-social-media-hate/